Executive Summary

The rapid evolution of multi-agent AI systems has encountered a critical structural barrier: the lack of a disciplined memory architecture. While modern agents struggle with redundant computations, stale data, and conflicting states, the field of computer architecture offers a proven roadmap for solving these exact coordination problems. A landmark March 2026 position paper from UC San Diego argues that agent memory must transition from its current “ad-hoc chaos” to a formal three-layer hierarchy modeled on processor design.

Critical Takeaways:

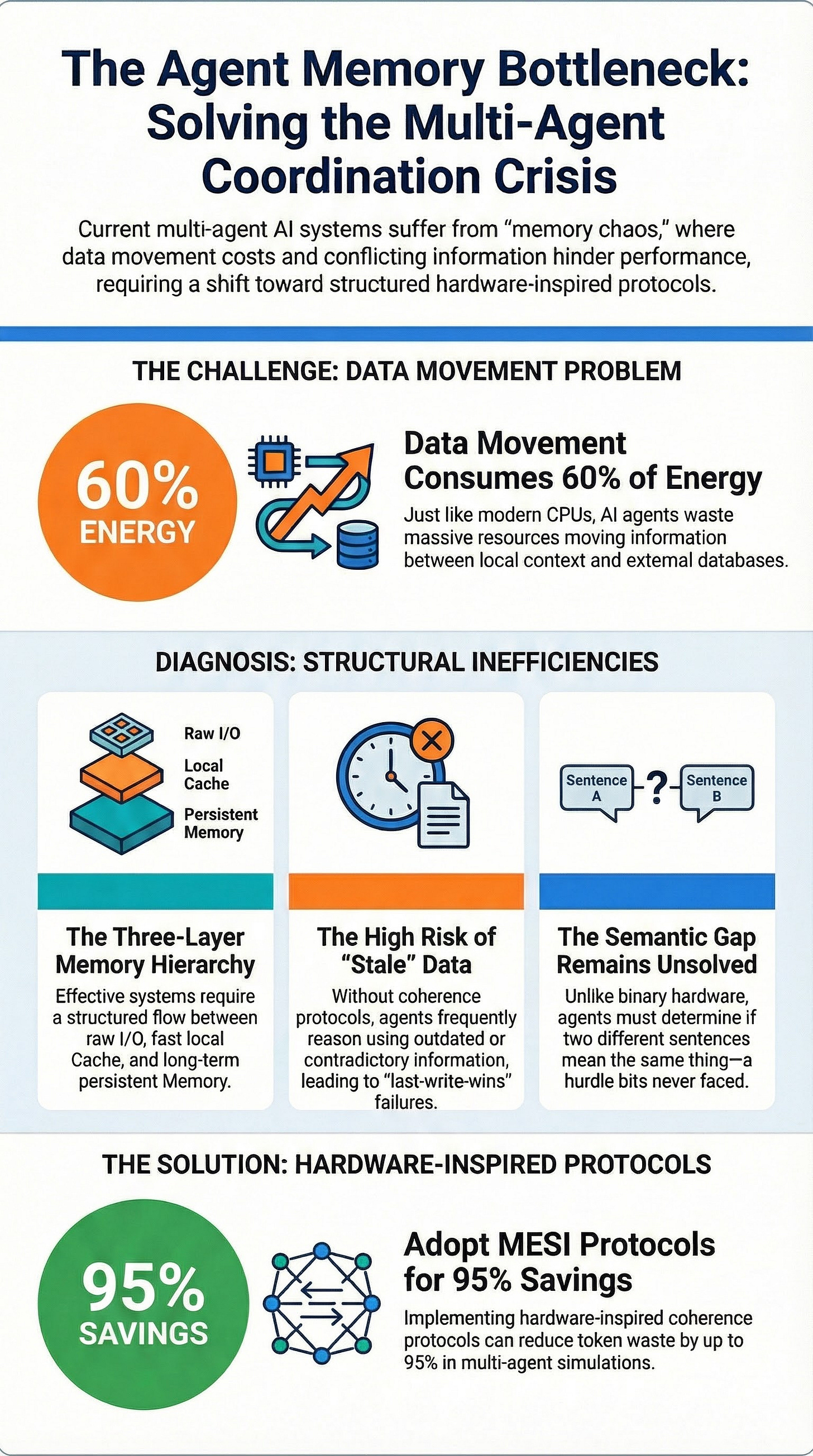

The von Neumann Bottleneck Resurfaces: Just as CPUs are limited by data movement between processor and memory, agents are throttled by the “expensive” movement of data between local context windows and distant knowledge stores.

Proven Solutions: Techniques developed for hardware (the MESI and MOESI coherence protocols, NUMA-aware placement) provide a blueprint for maintaining memory coherence and consistency. One simulation study of the Artifact Coherence System reports token savings of up to 95%.

The Semantic Gap: The fundamental challenge remains that hardware memory is binary (exact bit matching), while agent memory is semantic (natural language meaning). Current embedding similarity models are insufficient for identifying logical contradictions.

Emerging Standards: New frameworks like the Artifact Coherence System (ACS) and Kumiho are beginning to apply formal logic and hardware-inspired protocols to agent systems with promising results.

--------------------------------------------------------------------------------

The Three-Layer Agent Memory Hierarchy

Research from UC San Diego proposes a conceptual framework to organize agent memory into three distinct layers, mapping directly to classical computer architecture.

| Layer | Hardware Analogue | Agent Equivalent | Current Examples |
| --- | --- | --- | --- |
| I/O Layer | Peripherals/USB | Raw data entry (audio, text, API calls) | Anthropic MCP, JSON-RPC |
| Cache Layer | L1/L2/L3 Caches | Compressed context, KV cache, recent tool calls | DroidSpeak, KV-Comm |
| Memory Layer | RAM/Disk | Long-term history, vector stores, knowledge bases | Letta (MemGPT), Mem0, MemoRAG |

The core principle of this architecture is that agent performance is an “end-to-end data movement problem.” If information is trapped in the wrong layer, reasoning accuracy and cost-efficiency suffer.
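To make the data-movement framing concrete, here is a minimal sketch of a read path across the three layers. The class `TieredMemory`, its method names, and the FIFO eviction policy are all assumptions chosen for illustration; they do not come from the UCSD paper or from any of the listed systems.

```python
class TieredMemory:
    """Hypothetical three-layer store: I/O ingest, small cache, large memory."""

    def __init__(self, cache_capacity=2):
        self.cache = {}          # cache layer: small and fast
        self.long_term = {}      # memory layer: large and slow
        self.cache_capacity = cache_capacity
        self.slow_reads = 0      # counts trips to the memory layer

    def ingest(self, key, value):
        # I/O layer: raw input arrives and is persisted in the memory layer.
        self.long_term[key] = value
        # Drop any cached copy so a later lookup cannot return stale data.
        self.cache.pop(key, None)

    def lookup(self, key):
        if key in self.cache:
            return self.cache[key]           # fast path
        self.slow_reads += 1                 # slow path: fetch from memory layer
        value = self.long_term[key]
        if len(self.cache) >= self.cache_capacity:
            oldest = next(iter(self.cache))  # dicts keep insertion order (FIFO)
            del self.cache[oldest]
        self.cache[key] = value
        return value
```

Counting `slow_reads` gives a rough proxy for the cost of information sitting in the wrong layer: every repeated lookup served from the cache is a fetch the agent did not have to pay for.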

Critical Missing Protocols

The UCSD paper identifies two specific gaps preventing this hierarchy from functioning efficiently:

Agent Cache Sharing Protocol: A mechanism allowing one agent to reuse another agent’s cached results, preventing redundant LLM recomputations.

Structured Memory Access Control: A formal definition of who can read or write to specific memory blocks (e.g., “agent RDMA” patterns).
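The two missing protocols named above can be sketched together as a shared result cache with a per-entry read list. This is only an illustration under stated assumptions: `SharedAgentCache`, its methods, and the idea of keying entries by a hash of the prompt are hypothetical and are not an API defined by the UCSD paper.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class SharedAgentCache:
    """Hypothetical cache that lets agents reuse each other's results."""
    entries: dict = field(default_factory=dict)   # key -> (owner, result)
    readers: dict = field(default_factory=dict)   # key -> agents allowed to read

    @staticmethod
    def key_for(prompt: str) -> str:
        # Exact-match key; semantically similar prompts will NOT share an entry.
        return hashlib.sha256(prompt.encode("utf-8")).hexdigest()

    def put(self, agent: str, prompt: str, result: str, allow: set) -> None:
        key = self.key_for(prompt)
        self.entries[key] = (agent, result)
        self.readers[key] = set(allow) | {agent}   # the owner can always read

    def get(self, agent: str, prompt: str):
        key = self.key_for(prompt)
        if key not in self.entries:
            return None                            # miss: caller must recompute
        if agent not in self.readers[key]:
            raise PermissionError(f"{agent} may not read this entry")
        return self.entries[key][1]


cache = SharedAgentCache()
cache.put("planner", "Summarize the Q3 report", "Revenue rose.", allow={"writer"})
reused = cache.get("writer", "Summarize the Q3 report")  # no second LLM call
```

The exact-hash key is a deliberate simplification. It sidesteps the question of when two differently worded requests should count as the same entry.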

--------------------------------------------------------------------------------

Lessons from Hardware: Coherence and Consistency

The hardware industry solved the “multiprocessor problem” (where different cores hold different values for the same address) through a series of sophisticated protocols.

The MESI and MOESI Models

Developed in the 1980s, these protocols ensure that a “load always returns the value from the most recent store.”

MESI (Modified, Exclusive, Shared, Invalid): This protocol ensures a Single-Writer/Multiple-Reader invariant. If an agent (core) writes to a shared block, it must invalidate all other copies first.

MOESI (adds an Owned state): An optimization that allows “dirty sharing,” where a cache can supply updated data directly to another core without waiting for a slow write-back to main memory.

Snooping vs. Directory: While small systems can “snoop” (broadcast every change), larger systems require a “directory” to track which agent holds which information to prevent bandwidth collapse.
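The MESI transitions and the directory idea described above can be sketched for a single shared block as follows. This is a simplified illustration, not a faithful hardware model: `BlockDirectory` is a hypothetical name, writes replace the whole block, and the Owned state of MOESI is left out.

```python
from enum import Enum


class State(Enum):
    MODIFIED = "M"
    EXCLUSIVE = "E"
    SHARED = "S"
    INVALID = "I"


class BlockDirectory:
    """Hypothetical directory entry for ONE shared memory block."""

    def __init__(self, value):
        self.value = value   # authoritative copy (the "main memory")
        self.states = {}     # agent id -> State
        self.copies = {}     # agent id -> that agent's cached value

    def _flush_dirty_copy(self):
        # A MODIFIED copy is newer than self.value, so write it back first.
        for agent, state in self.states.items():
            if state is State.MODIFIED:
                self.value = self.copies[agent]

    def read(self, agent):
        if self.states.get(agent, State.INVALID) is not State.INVALID:
            return self.copies[agent]             # hit: local copy is valid
        self._flush_dirty_copy()
        holders = [a for a, s in self.states.items() if s is not State.INVALID]
        for holder in holders:
            self.states[holder] = State.SHARED    # M or E is downgraded to S
        self.states[agent] = State.SHARED if holders else State.EXCLUSIVE
        self.copies[agent] = self.value
        return self.value

    def write(self, agent, new_value):
        # Single writer: invalidate every other copy before the write lands.
        for other in self.states:
            if other != agent:
                self.states[other] = State.INVALID
        self.states[agent] = State.MODIFIED
        self.copies[agent] = new_value
```

Because the directory records who holds the block, a write only touches the agents listed in `states` instead of broadcasting to every agent in the system, which is the scaling argument for directories over snooping.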

Memory Consistency Models

While coherence focuses on a single location, consistency defines the order of operations across multiple locations:

Sequential Consistency: All agents see the exact same global order. This is intuitive but “disastrously slow.”

Release Consistency: Agents work independently and only synchronize at defined “acquire” (lock) and “release” (unlock) points. This is identified as the most natural fit for independent AI agents.
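As a rough sketch of release consistency applied to agents, the class below buffers an agent's writes locally and publishes them only at the release point. `SharedStore` and `ReleaseConsistentAgent` are hypothetical names, and holding one global lock between acquire and release is an assumption made to keep the example short.

```python
import threading


class SharedStore:
    """Hypothetical shared memory guarded by a single lock."""

    def __init__(self):
        self.data = {}
        self.lock = threading.Lock()


class ReleaseConsistentAgent:
    def __init__(self, store):
        self.store = store
        self.view = {}      # snapshot of shared data taken at acquire
        self.pending = {}   # writes buffered until release

    def acquire(self):
        # Synchronization point 1: take the lock and pull the latest state.
        self.store.lock.acquire()
        self.view = dict(self.store.data)

    def read(self, key):
        # An agent always sees its own buffered writes first.
        return self.pending.get(key, self.view.get(key))

    def write(self, key, value):
        self.pending[key] = value   # not yet visible to other agents

    def release(self):
        # Synchronization point 2: publish buffered writes, then unlock.
        self.store.data.update(self.pending)
        self.pending.clear()
        self.store.lock.release()
```

Between the two synchronization points the agent never touches shared state, so its intermediate writes cost nothing to other agents. Sequential consistency would instead require every write to be globally ordered as it happens.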

NUMA: The Affinity Analogy

Non-Uniform Memory Access (NUMA) architecture recognizes that local memory is faster than remote memory. This mirrors agent topology: an agent’s local context is “fast,” while a shared database is “slow.” Effective agent frameworks must adopt “memory affinity,” assigning tasks to agents that already have the relevant context to avoid “remote” access penalties.
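Memory affinity can be illustrated with a small scheduling function that routes a task to the agent needing the fewest remote fetches. The function name `pick_agent` and the representation of context as a set of keys are assumptions made for this sketch.

```python
def pick_agent(task_keys, agent_contexts):
    """Return the agent whose local context already covers most of the task.

    task_keys:      set of memory keys the task needs
    agent_contexts: dict mapping agent id -> set of keys already held locally
    """
    def remote_fetches(agent):
        # Keys the agent would have to pull from the slow shared store.
        return len(set(task_keys) - agent_contexts[agent])

    # Iterating in sorted order makes ties resolve to the first agent by name.
    return min(sorted(agent_contexts), key=remote_fetches)


contexts = {
    "researcher": {"client_brief", "style_guide"},
    "coder": {"repo_layout", "style_guide"},
}
chosen = pick_agent({"client_brief", "style_guide"}, contexts)
```

A production scheduler would also weigh agent load and capability. This sketch isolates only the locality term.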

--------------------------------------------------------------------------------

Analysis of Current Agent Memory Systems

Despite the availability of hardware-inspired solutions, current agent frameworks lack formal coherence mechanisms.

Letta (formerly MemGPT): Uses virtual memory-inspired paging but relies on “last-write-wins” for multi-agent updates. This leads to lost updates and silent data corruption if multiple agents edit the same block.

LangGraph & LangChain: Provide state persistence and transitions but require manual schema design for conflict resolution with no built-in coherence protocols.

CrewAI & AutoGen: Utilize basic serialization locks or centralized transcripts. Documentation acknowledges that isolated memory buffers often lead to “conflicting actions” as inconsistencies snowball.

MemRL: Focuses on single-agent utility through reinforcement learning but lacks cross-agent coordination mechanisms.
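The lost-update failure attributed to last-write-wins above can be reproduced in a few lines, alongside a version check that detects the conflict. `VersionedBlock` is a generic, hypothetical illustration and does not represent the API of Letta or any other framework listed here.

```python
class VersionedBlock:
    """Hypothetical shared memory block with a version counter."""

    def __init__(self, value):
        self.value = value
        self.version = 0

    def read(self):
        return self.value, self.version

    def write_last_wins(self, value):
        # No conflict check: whoever writes last silently overwrites.
        self.value = value
        self.version += 1

    def write_if_unchanged(self, value, expected_version):
        # Compare-and-swap: refuse the write if someone else wrote first.
        if self.version != expected_version:
            return False
        self.value = value
        self.version += 1
        return True


# Two agents read the same notes, then each appends its own finding.
block = VersionedBlock(["kickoff done"])
notes_1, seen_1 = block.read()
notes_2, seen_2 = block.read()

block.write_last_wins(notes_1 + ["agent 1: budget approved"])
block.write_last_wins(notes_2 + ["agent 2: deadline moved"])
lost_update_result = block.value   # agent 1's note is gone
```

With `write_if_unchanged`, the second agent's write would return `False`, which signals that it must re-read and merge rather than overwrite.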

--------------------------------------------------------------------------------

The Semantic Gap: The Fundamental Challenge

The primary obstacle in translating hardware solutions to AI is that agent memory is natural language, not bits.

The Contradiction Problem

In hardware, checking if two values are the same is a bit-comparison. In agent systems, two memories may be semantically similar but logically contradictory (e.g., “The client likes warm tones” vs. “The client likes cool tones”). Standard embedding similarity often fails to distinguish these, as both phrases exist in the same “semantic neighborhood.”

Emerging Theoretical Solutions

SparseCL (2024): Uses contrastive learning to measure “Hoyer sparsity” in difference vectors to detect contradictions.

Kumiho (March 2026): Employs AGM Belief Revision, a formal theory for how rational agents update beliefs. It uses a graph-native architecture to propagate changes downstream when a core belief is revised.

The UCSD Perspective: Agent “writes” are speculative and may be wrong. Conflict resolution requires domain reasoning rather than simple timestamps.
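The Hoyer sparsity measure mentioned for SparseCL has a standard closed form: for a vector of length n it is (sqrt(n) - L1/L2) / (sqrt(n) - 1), which is 1 for a vector with a single nonzero entry and 0 for a vector whose entries are all equal in magnitude. The sketch below computes it on the difference of two embeddings. The four-dimensional vectors are invented toy values, not output from a real model, and the sketch does not reproduce SparseCL's training procedure.

```python
import math


def hoyer_sparsity(vec):
    """Hoyer sparsity in [0, 1]; higher means the vector is more sparse."""
    n = len(vec)
    l1 = sum(abs(x) for x in vec)
    l2 = math.sqrt(sum(x * x for x in vec))
    if n < 2 or l2 == 0:
        raise ValueError("need at least 2 dimensions and a nonzero vector")
    return (math.sqrt(n) - l1 / l2) / (math.sqrt(n) - 1)


def difference(a, b):
    return [x - y for x, y in zip(a, b)]


# Toy embeddings (assumed values): the contradiction differs in ONE dimension,
# while the paraphrase differs slightly in every dimension.
warm_tones = [0.8, 0.1, 0.5, 0.3]
cool_tones = [0.8, 0.1, -0.5, 0.3]
paraphrase = [0.75, 0.14, 0.44, 0.35]

contradiction_score = hoyer_sparsity(difference(warm_tones, cool_tones))
paraphrase_score = hoyer_sparsity(difference(warm_tones, paraphrase))
```

In this toy setup the contradictory pair yields a much sparser difference vector than the paraphrase pair, which is the kind of signal that cosine similarity alone does not expose.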

--------------------------------------------------------------------------------

Breakthrough: The Artifact Coherence System (ACS)

Recent research by Vladyslav Parakhin (March 2026) demonstrates the first successful application of MESI protocols to agent systems via the Artifact Coherence System (ACS).

Design: A TLA+-verified protocol mapping MESI states to artifact authorization.

Performance: In simulations, ACS achieved token savings of 95.0% at low volatility. Even under extreme volatility (V=0.9), it maintained an 81% reduction in synchronization overhead.

Implementation: The system uses “lazy invalidation” and can be integrated into existing frameworks like LangGraph and CrewAI through thin adapter layers.

Scope: While it successfully synchronizes structured “artifacts” (documents, code), the author acknowledges that “fuzzy” semantic beliefs remain an open challenge.
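One way to picture lazy invalidation is a version check performed only when an agent next uses an artifact, instead of pushing every change to every agent. The sketch below is a loose illustration of that general idea. `ArtifactStore` and `CachingAgent` are hypothetical names, and nothing here reproduces the actual ACS protocol or its TLA+ specification.

```python
class ArtifactStore:
    """Hypothetical store of structured artifacts with version numbers."""

    def __init__(self):
        self.content = {}
        self.version = {}

    def write(self, name, text):
        self.content[name] = text
        self.version[name] = self.version.get(name, 0) + 1
        # Lazy: no notification is sent to agents holding older copies.


class CachingAgent:
    def __init__(self, store):
        self.store = store
        self.cache = {}     # artifact name -> (version, text)
        self.fetches = 0    # full-content transfers, a proxy for token cost

    def use(self, name):
        current = self.store.version[name]      # cheap metadata check
        cached = self.cache.get(name)
        if cached is None or cached[0] != current:
            # Stale or missing: only now pay for the full transfer.
            self.cache[name] = (current, self.store.content[name])
            self.fetches += 1
        return self.cache[name][1]
```

When an artifact changes rarely relative to how often it is read, most calls to `use` end at the metadata check. That is consistent with the reported pattern of savings shrinking as volatility rises.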

--------------------------------------------------------------------------------

Conclusion: The Road to Engineering Discipline

The synthesis of computer architecture and agent memory marks a transition from “art” to “engineering.” The hardware analogy provides a roadmap: moving from ad-hoc shared memory to structured hierarchies and coherence protocols.

While the “semantic gap” prevents a 1:1 translation of hardware logic, the integration of structured knowledge representations (like knowledge graphs) and formal belief revision (like Kumiho) suggests a path forward. The next phase of agent development will likely rely on these architectural principles to ensure that AI systems are not just faster, but logically consistent and resource-efficient.