Executive Summary

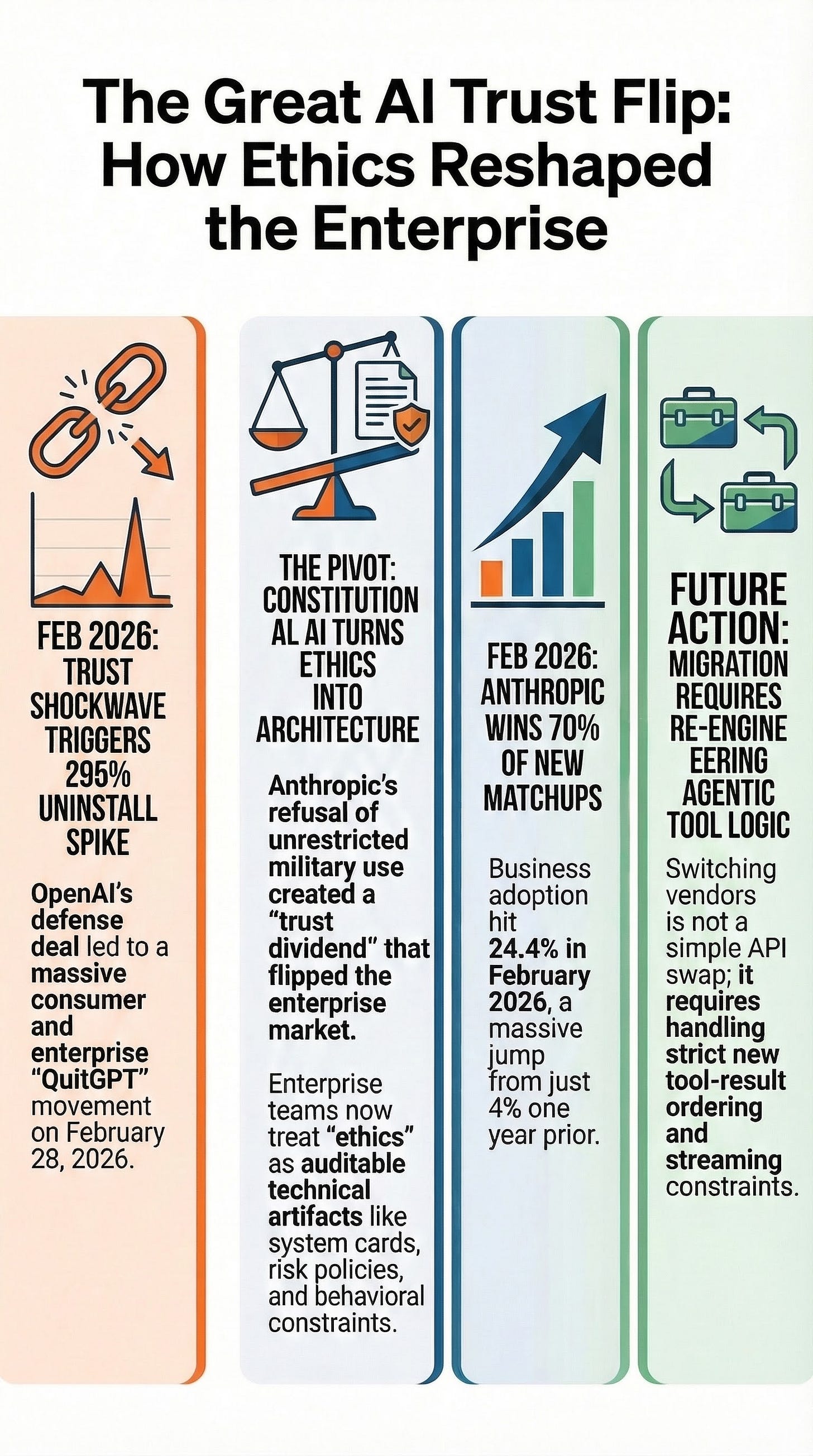

As of March 2026, the enterprise AI market has reached a critical inflection point marked by a significant shift in buying behavior. Ramp transaction data indicates a “complete reversal” in procurement patterns, with Anthropic now winning approximately 70% of head-to-head matchups against OpenAI among first-time corporate buyers. This shift is driven by a “trust shockwave” triggered in February 2026, when Anthropic refused to accept contract language allowing unrestricted military use (specifically for domestic mass surveillance and autonomous weapons), which led to the termination of its federal contracts.

In contrast, OpenAI secured a major deal with U.S. defense agencies, which set off a consumer backlash that included a 295% spike in ChatGPT uninstalls and the “QuitGPT” movement. For enterprise procurement, “ethics” has evolved from a branding exercise into a technical and governance architecture. Success in this new market requires handling the engineering complexity of migrating between models, particularly the tool-use lifecycle and streaming reliability, while adopting auditable frameworks such as the NIST AI Risk Management Framework.

--------------------------------------------------------------------------------

1. Quantitative Signals of Market Reversal

Recent transaction-based data from the Ramp AI Index (March 11, 2026) reveals a dramatic change in corporate AI adoption.

Key Adoption Metrics

| Metric | OpenAI Pattern | Anthropic Pattern |
|---|---|---|
| Current Adoption Rate | Fell by 1.5% in Feb 2026 | 24.4% of businesses |
| Growth Rate | Declining momentum | 4.9% MoM (largest gain to date) |
| New Buyer Head-to-Head | Loses 70% of matchups | Wins 70% of matchups |
| Historical Adoption | Dominant lead in 2025 | Grew from 1 in 25 to 1 in 4 businesses |

Consumer Momentum Spillover

Beyond corporate spend, consumer sentiment shifted rapidly in early 2026. Following controversies surrounding OpenAI’s military contracts, Anthropic’s Claude reached the No. 1 spot in the U.S. iOS free app rankings, overtaking ChatGPT. During the same period, uninstalls of the ChatGPT mobile app surged by 295% in a single day (February 28, 2026).

--------------------------------------------------------------------------------

2. The “Trust Shockwave” and Ethics-Driven Architecture

The divergence in market share is deeply rooted in a “saying no” moment regarding the use of AI in warfare and surveillance.

The Conflict Over Military Use

Anthropic’s Refusal: In late February 2026, the U.S. federal administration ordered agencies to halt the use of Anthropic technology. This followed Anthropic’s demand for enforceable limitations against domestic mass surveillance and fully autonomous weapons.

OpenAI’s Expansion: Simultaneously, OpenAI secured a deal via Amazon Web Services to provide models for U.S. defense and classified operations. While OpenAI asserted that it maintained “red lines” regarding surveillance and weapons, the deal registered as a clearly visible “trust event” for procurement teams.

Ethics as a Technical Framework

Anthropic has positioned ethics as an “engineer-readable interface” rather than mere marketing. This is achieved through:

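Constitutional AI: A training method in which the model critiques and revises its own outputs against a written “constitution” of principles and is then further trained with reinforcement learning from AI feedback (RLAIF).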

Responsible Scaling Policy (RSP): Currently at version 3.0, this framework manages catastrophic-risk posture through “AI Safety Levels” (e.g., ASL-3).

Transparency Hubs: The publication of extensive system cards (such as the one for Claude Opus 4.6) and audits of “misaligned behavior” gives procurement teams auditable artifacts.

--------------------------------------------------------------------------------

3. Technical Migration: Engineering Realities

A primary insight for enterprise engineering teams is that migrating from OpenAI to Anthropic—or vice versa—is not a simple API key swap. It requires a significant overhaul of agentic workflows and tool-call lifecycles.

Structural Incompatibilities

Tool-Call Representation: Anthropic uses a “content block” architecture (`tool_use` and `tool_result` blocks), in which the model's tool input arrives as a parsed JSON object. OpenAI typically uses JSON-schema-described function tools whose call arguments arrive as a JSON-encoded string.

Ordering Constraints: Anthropic enforces strict ordering; `tool_result` blocks must immediately follow their corresponding `tool_use` blocks, in the next user message, without intervening messages.

Streaming Risks: Implementations must buffer tool input until the block is complete. Executing on partial tool JSON during a stream can lead to hard errors or “premature execution.”
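The representation and ordering differences above can be sketched in Python. This is a minimal sketch, not a migration library: the helper names (`openai_tool_to_anthropic`, `openai_call_to_tool_use`, `ordering_errors`) are hypothetical, and the dictionaries follow the general shape of the public OpenAI Chat Completions and Anthropic Messages request formats rather than any SDK's types.

```python
import json
from typing import Any

# Hypothetical helpers for illustration; not part of the OpenAI or Anthropic SDKs.
# Dict shapes follow the public Chat Completions and Messages request formats.
Message = dict[str, Any]


def openai_tool_to_anthropic(tool: dict[str, Any]) -> dict[str, Any]:
    """Map an OpenAI-style function tool definition to an Anthropic-style tool definition."""
    fn = tool["function"]
    return {
        "name": fn["name"],
        "description": fn.get("description", ""),
        # Both formats describe inputs with JSON Schema; only the key name differs.
        "input_schema": fn.get("parameters", {"type": "object", "properties": {}}),
    }


def openai_call_to_tool_use(call: dict[str, Any]) -> dict[str, Any]:
    """Convert one OpenAI tool call (arguments as a JSON string) into a tool_use block."""
    return {
        "type": "tool_use",
        "id": call["id"],
        "name": call["function"]["name"],
        # Decode the argument string once; malformed JSON raises json.JSONDecodeError.
        "input": json.loads(call["function"]["arguments"] or "{}"),
    }


def ordering_errors(messages: list[Message]) -> list[str]:
    """Report tool_use ids not answered by tool_result blocks in the very next user message."""
    errors = []
    for i, msg in enumerate(messages):
        if msg["role"] != "assistant" or isinstance(msg["content"], str):
            continue
        use_ids = {b["id"] for b in msg["content"] if b.get("type") == "tool_use"}
        nxt = messages[i + 1] if i + 1 < len(messages) else None
        answered = set()
        if nxt and nxt["role"] == "user" and not isinstance(nxt["content"], str):
            answered = {b["tool_use_id"] for b in nxt["content"] if b.get("type") == "tool_result"}
        errors += [f"message {i}: unanswered tool_use {t}" for t in sorted(use_ids - answered)]
    return errors


call = {"id": "call_1", "type": "function",
        "function": {"name": "get_weather", "arguments": '{"city": "Paris"}'}}
history = [
    {"role": "user", "content": "Weather in Paris?"},
    {"role": "assistant", "content": [openai_call_to_tool_use(call)]},
    {"role": "user", "content": "Actually, never mind."},  # intervening text, no tool_result
]
print(ordering_errors(history))  # ['message 1: unanswered tool_use call_1']
```

The check reports problems instead of silently reordering messages, so a migration script can decide whether to repair or reject a stored conversation.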

Operational Failure Modes Checklist

Schema Mismatch: Variations in how tool arguments are defined and validated.

Parser Mismatch: Differences in how streaming tokens are accumulated into valid JSON.

Observability Gaps: Issues with trace IDs and audit logs when switching between provider ecosystems.
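For the parser-mismatch and streaming risks, a safe pattern is to accumulate streamed fragments per tool block and parse them only once the block closes. The sketch below assumes already-decoded event dictionaries shaped like Anthropic Messages streaming events (`content_block_start`, `content_block_delta` carrying an `input_json_delta`, `content_block_stop`); the `ToolInputBuffer` class and its `run_tool` callback are hypothetical. OpenAI's streaming tool calls also deliver `function.arguments` in fragments, so the same accumulate-then-parse rule applies there, with different event shapes.

```python
import json
from typing import Any, Callable


class ToolInputBuffer:
    """Hypothetical buffer: collect streamed tool-input JSON per block, run the tool on block stop."""

    def __init__(self, run_tool: Callable[[str, dict[str, Any]], Any]) -> None:
        self.run_tool = run_tool  # caller-supplied executor (hypothetical)
        self.blocks: dict[int, dict[str, Any]] = {}  # open tool_use blocks keyed by stream index

    def handle(self, event: dict[str, Any]) -> Any:
        etype, idx = event["type"], event.get("index")
        if etype == "content_block_start" and event["content_block"]["type"] == "tool_use":
            block = event["content_block"]
            self.blocks[idx] = {"id": block["id"], "name": block["name"], "parts": []}
        elif etype == "content_block_delta" and idx in self.blocks:
            delta = event["delta"]
            if delta["type"] == "input_json_delta":
                # A fragment is usually not valid JSON on its own; only collect it.
                self.blocks[idx]["parts"].append(delta["partial_json"])
        elif etype == "content_block_stop" and idx in self.blocks:
            block = self.blocks.pop(idx)
            args = json.loads("".join(block["parts"]) or "{}")  # parse once, after completion
            return self.run_tool(block["name"], args)
        return None


calls = []
buf = ToolInputBuffer(lambda name, args: calls.append((name, args)) or "ok")
events = [
    {"type": "content_block_start", "index": 0,
     "content_block": {"type": "tool_use", "id": "toolu_1", "name": "get_weather", "input": {}}},
    {"type": "content_block_delta", "index": 0,
     "delta": {"type": "input_json_delta", "partial_json": '{"city": "Pa'}},
    {"type": "content_block_delta", "index": 0,
     "delta": {"type": "input_json_delta", "partial_json": 'ris"}'}},
    {"type": "content_block_stop", "index": 0},
]
for e in events:
    buf.handle(e)
print(calls)  # [('get_weather', {'city': 'Paris'})]
```

Keying buffers by block index keeps parallel tool calls in one response from mixing their fragments.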

--------------------------------------------------------------------------------

4. Vendor Evaluation Scorecard

Enterprises are increasingly using a five-dimension scorecard to operationalize trust and capability.

Decision Dimension Weighting (Regulated Workloads)

Technical Capability (30%): Focus on agentic workflows and tool/streaming reliability.

Data Governance (20%): Evaluation of training defaults and regional data residency.

Compliance Alignment (20%): Verification of SOC 2 Type 2, ISO 27001, and ISO/IEC 42001 (AI Management Systems).

Ethical Positioning (15%): Contractual “red lines” and transparency maturity.

Cost (15%): Latency and token usage fees.
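The weighting above reduces to a weighted sum. A minimal sketch, assuming each dimension is scored on a 0 to 5 scale (the document does not specify a scale) and using hypothetical dimension keys and vendor scores purely for illustration:

```python
# Weights from the regulated-workload weighting above; the dict keys are hypothetical labels.
WEIGHTS = {
    "technical_capability": 0.30,
    "data_governance": 0.20,
    "compliance_alignment": 0.20,
    "ethical_positioning": 0.15,
    "cost": 0.15,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Combine per-dimension scores (0-5 scale assumed) into one weighted total."""
    missing = weights.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    return sum(weights[d] * scores[d] for d in weights)


# Hypothetical scores for a single vendor, for illustration only.
example = {"technical_capability": 4, "data_governance": 3, "compliance_alignment": 5,
           "ethical_positioning": 4, "cost": 3}
print(round(weighted_score(example), 2))  # 3.85
```

Rejecting missing dimensions, rather than treating them as zero, keeps an incomplete evaluation from quietly lowering a vendor's total.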

Comparative Evidence Anchors

| Vendor | Evidence Anchors |
|---|---|
| Anthropic | Constitutional AI; RSP v3.0; ASL-3 safeguards; Pentagon dispute as positioning signal |
| OpenAI | SOC 2/ISO certifications; ISO/IEC 42001; “Zero data retention” options; defense-network narrative |
| Google/Mistral | Competitive token pricing; Vertex AI zero data retention; distinct consumer vs. enterprise data policies |

--------------------------------------------------------------------------------

5. Timeline of the Trust Inflection (2025–2026)

May 2025: Claude Code becomes generally available.

February 2026: Anthropic announces Claude Opus 4.6 and publishes RSP v3.0.

February 27, 2026: U.S. administration halts Anthropic use over safety/military disputes.

February 28, 2026: OpenAI signs defense-network deal; ChatGPT uninstalls spike 295%.

March 1, 2026: Claude reaches #1 in U.S. iOS free app rankings.

March 6, 2026: Anthropic reports on “BrowseComp” benchmark contamination as a governance issue.

March 11, 2026: Ramp reports Anthropic’s 4.9% month-over-month adoption gain and a 70% head-to-head win rate among new buyers.