Executive Summary

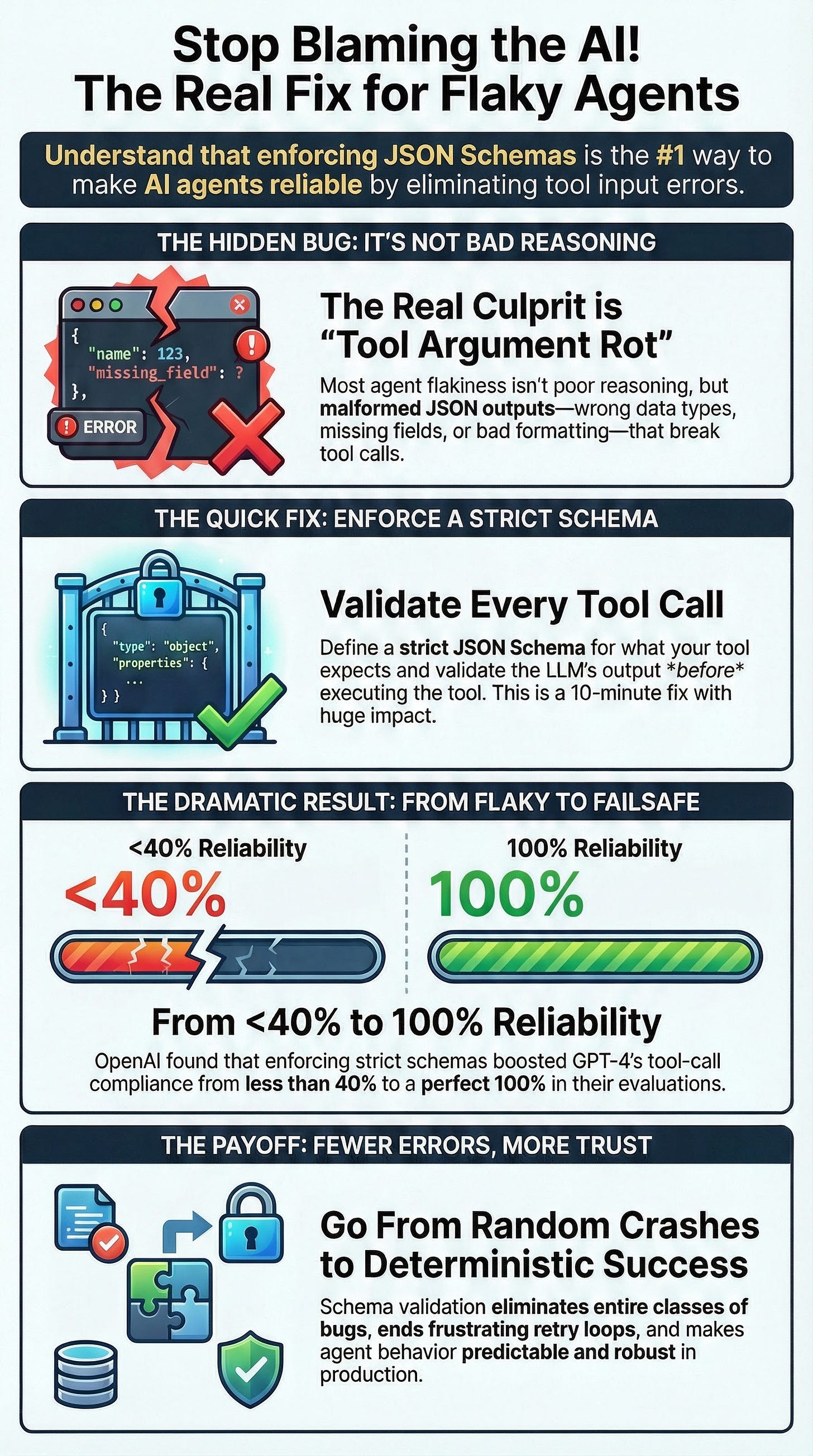

A significant portion of AI agent “flakiness” and production failures stems not from flawed reasoning but from “tool argument rot”—the generation of malformed JSON, missing fields, or incorrect data types when calling tools. This fundamental issue causes tool calls to misfire, leading to unpredictable behavior and spiraling error loops. The core solution is to shift from best-effort parsing to a deterministic approach by enforcing structured outputs validated against a strict JSON Schema for every tool action.

By implementing schema validation, the tool integration layer becomes robust and predictable. The agent either produces a perfectly formatted, valid tool call or it fails fast, eliminating ambiguous “close enough” attempts that introduce instability. This approach has a measurable and dramatic impact on reliability. OpenAI reported that its gpt-4o-2024-08-06 model with Structured Outputs scored 100% on its evaluations of complex JSON schema following, while the older gpt-4-0613 scored under 40%. Teams in the community have seen similar results, with one reporting a 7x improvement in multi-step workflow accuracy.

This strategy is a high-impact, low-effort “quick win” that can be implemented in minutes per tool. As agent deployments stall in production due to reliability concerns, adopting schema validation is arguably the single most effective change to harden agent architectures, immediately addressing a primary source of bugs and instability.

--------------------------------------------------------------------------------

1. The Core Problem: “Tool Argument Rot”

A pervasive issue undermining the reliability of AI agents is “tool argument rot.” This term describes a class of errors where the agent’s output does not perfectly match the expected input format of a tool. This is a more frequent cause of failure than model hallucinations or poor reasoning.

Key Manifestations of Tool Argument Rot:

Malformed JSON: The output is not syntactically valid JSON, causing parsing errors (`JSONDecodeError`).

Missing Fields: Required arguments for a tool call are omitted.

Incorrect Data Types: A field is provided as a number when a string is expected, or vice-versa.

Silent Type Coercions: Data types are implicitly changed, leading to unexpected behavior within the tool.
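The four manifestations above can be reproduced with the standard library alone. The following is a minimal sketch; the payloads and the field names (`customer_id`, `quantity`, `dry_run`) are hypothetical and chosen only for illustration.

```python
import json

# 1. Malformed JSON: a trailing comma makes the payload unparseable.
malformed = '{"customer_id": "c-42", "quantity": 2,}'
try:
    json.loads(malformed)
    malformed_error = None
except json.JSONDecodeError as exc:
    malformed_error = type(exc).__name__

# 2. Missing field: the payload parses, but a required argument is absent.
missing = json.loads('{"customer_id": "c-42"}')
missing_fields = [k for k in ("customer_id", "quantity") if k not in missing]

# 3. Incorrect data type: quantity arrives as the string "2", not the integer 2.
wrong_type = json.loads('{"customer_id": "c-42", "quantity": "2"}')
quantity_is_int = isinstance(wrong_type["quantity"], int)

# 4. Silent coercion: the non-empty string "false" is truthy in Python,
#    so a naive bool() conversion flips the intended meaning.
flag = json.loads('{"dry_run": "false"}')["dry_run"]
coerced_flag = bool(flag)

print(malformed_error, missing_fields, quantity_is_int, coerced_flag)
```

Only the first case raises an exception on its own. The other three parse cleanly, which is why they tend to surface later as incorrect tool behavior rather than as an obvious error.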

These formatting errors cause tool calls to fail outright or, more dangerously, execute incorrectly. Many production failures diagnosed as the model “being dumb” are, in fact, simple formatting mismatches. This reliability gap is a significant factor in the observed industry-wide slowdown of enterprise AI agent rollouts, as organizations struggle to make them robust outside of controlled lab environments.

2. The Solution: Enforcing JSON Schemas on Tool Inputs

The most effective solution to combat tool argument rot is to enforce structured outputs by validating all tool inputs against a predefined JSON Schema. This approach treats the interface between the LLM and the tool as a strict API contract, making it nearly deterministic.

A High-Impact “Quick Win”

Implementing schema validation is considered a “quick win” due to its exceptionally high return on investment.

Minimal Effort: Adding a JSON schema validation layer to a tool can take as little as 5-10 minutes. This can be done by supplying a schema directly to the LLM API call (e.g., OpenAI’s function calling with `{"strict": true, ...}`) or by using a validation library in the agent’s code.

Immediate Impact: This single change eliminates an entire class of errors upfront. The agent either produces a correctly formed call or does not proceed, dramatically reducing failures and “weird behaviors.”

Reduced Complexity: It eliminates the need for complex, brittle error-handling loops and “fix the format” guardrail prompts that attempt to recover from malformed outputs.

As one practitioner noted, this approach is essential for production reliability: “agents will try to call tools with wrong parameters… We thought better prompting would fix this. It doesn’t. You need strict validation and recovery flows.”
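To make the first option concrete, here is a sketch of what a strict tool definition might look like when written as a Python dictionary in the shape commonly used for OpenAI-style function calling. The tool name `get_order_status` and its fields are hypothetical, and the exact placement of the `strict` flag should be checked against the provider’s current documentation before use.

```python
import json

# Hypothetical tool definition; names and fields are illustrative assumptions.
get_order_status_tool = {
    "type": "function",
    "function": {
        "name": "get_order_status",
        "description": "Look up the status of a customer order.",
        "strict": True,  # ask the provider to enforce the schema below
        "parameters": {
            "type": "object",
            "properties": {
                "order_id": {"type": "string"},
                "include_history": {"type": "boolean"},
            },
            # Strict contracts list every property as required and
            # forbid any field that is not declared above.
            "required": ["order_id", "include_history"],
            "additionalProperties": False,
        },
    },
}

print(json.dumps(get_order_status_tool, indent=2))
```

The same `parameters` object can double as the schema for a validation library on the agent side, so the contract is defined once and checked in both places.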

3. Evidence and Validation of Effectiveness

The benefits of schema validation are supported by authoritative guidance, community experience, and empirical data.

Authoritative and Foundational Support

Official Guidance: OpenAI’s documentation strongly advocates for using Structured Outputs with JSON Schemas to improve reliability. The company evolved its offerings from a basic “JSON mode” to true schema enforcement in mid-2024 precisely because developers were struggling with format errors.

Software Engineering Principles: The practice aligns with established principles of robust system design. Defining and validating against a clear API contract is a cornerstone of software engineering, analogous to using input validators for web forms or type checkers in code.

Community and Real-World Evidence

Common Failure Mode: Community forums and GitHub issue trackers are filled with examples of agent failures caused by invalid tool arguments, leading to exceptions like `SyntaxError` and endless loops.

Brittle Anti-Patterns: Historically, many agent frameworks and tutorials demonstrated fragile approaches, such as manually parsing JSON with `json.loads()` and checking for keys with `if key in obj`, which fails to handle a wide range of formatting edge cases.

Measurable Success Metrics

The primary metric for success is the tool-call failure rate, which should drop to low single digits or zero after implementation.

OpenAI Evaluation: In OpenAI’s evaluations of complex JSON schema following, gpt-4o-2024-08-06 with Structured Outputs achieved 100% compliance with its output schema, compared to less than 40% for the older gpt-4-0613 without this enforcement.

Community Case Studies:

A financial services team reduced its data ingestion error rate from approximately 5% to under 0.3% by adding schema validation.

An engineering team achieved a 7x improvement in accuracy (from 10% to 70% success) on a multi-step workflow by introducing structured validation loops.

Secondary Benefits:

Fewer retry loops.

Shorter debugging cycles with clear schema validation errors.

Higher confidence in scaling systems with multiple tools and agents.

A simple experiment comparing 20 agent runs before and after schema validation can starkly illustrate the improvement, with failure rates dropping from a significant percentage (e.g., 30%) to nearly zero.
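The before/after comparison reduces to simple arithmetic over run outcomes. The sketch below assumes each run is recorded as a boolean (True when the tool call succeeded); the outcome lists are placeholders that mirror the 30% example above, not measured data.

```python
def failure_rate(outcomes):
    """Return the fraction of runs whose tool call failed (False = failed)."""
    if not outcomes:
        raise ValueError("need at least one run")
    return outcomes.count(False) / len(outcomes)


def improvement_factor(success_before, success_after):
    """Ratio of success rates, e.g. 10% -> 70% is roughly a 7x improvement."""
    return success_after / success_before


# Placeholder outcomes for 20 runs each (illustrative only).
runs_before = [False] * 6 + [True] * 14   # 6 of 20 tool calls failed
runs_after = [True] * 20                  # no failures after validation

print(f"before: {failure_rate(runs_before):.0%}")
print(f"after:  {failure_rate(runs_after):.0%}")
print(f"factor: {improvement_factor(0.10, 0.70):.1f}x")
```

Tracking this one number per tool, before and after adding validation, is usually enough to show whether the change is paying off.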

4. Implementation: Best Practices and Advanced Techniques

From Anti-Pattern to Best Practice

The Anti-Pattern (To Avoid): Manually parsing JSON and using conditional checks (`if 'key' in data`) to handle missing or malformed data. This approach is fragile, patching holes reactively rather than preventing them, and can lead to silent failures where a tool is called with invalid data (e.g., `None`).

The Best Practice (To Adopt):

Define a strict JSON Schema that precisely describes the tool’s expected input, including data types, required fields, and setting `"additionalProperties": false` to disallow extraneous fields.

Use a validation library (like `jsonschema` or `Pydantic` in Python) to validate the agent’s output against the schema.

Fail Fast: If validation fails, reject the tool call immediately and return a clear error. This forces the agent to correct its output before any action is taken. Only execute the tool call if the data is guaranteed to be valid.
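The contrast between the two approaches can be sketched as follows. To keep the example dependency-free, `validate_args` is a small hand-rolled stand-in that checks only `required`, flat `type` constraints, and `additionalProperties`; in practice a library such as `jsonschema` or Pydantic would take its place. The `send_email` tool and its schema are hypothetical.

```python
import json

# Hypothetical schema for an illustrative `send_email` tool.
SEND_EMAIL_SCHEMA = {
    "type": "object",
    "properties": {
        "to": {"type": "string"},
        "subject": {"type": "string"},
        "priority": {"type": "integer"},
    },
    "required": ["to", "subject"],
    "additionalProperties": False,
}

_JSON_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


class ToolArgumentError(ValueError):
    """Raised when tool arguments do not satisfy the schema."""


def brittle_call(raw):
    """Anti-pattern: a missing key silently becomes None and the tool still runs."""
    data = json.loads(raw)
    to = data["to"] if "to" in data else None
    return f"sent to {to}"


def validate_args(raw, schema):
    """Parse and validate arguments, raising on the first problem found."""
    try:
        args = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ToolArgumentError(f"malformed JSON: {exc.msg}") from exc
    if not isinstance(args, dict):
        raise ToolArgumentError("arguments must be a JSON object")
    for name in schema.get("required", []):
        if name not in args:
            raise ToolArgumentError(f"missing required field: {name}")
    props = schema.get("properties", {})
    for name, value in args.items():
        if name not in props:
            if schema.get("additionalProperties", True) is False:
                raise ToolArgumentError(f"unexpected field: {name}")
            continue
        type_name = props[name]["type"]
        # bool is a subclass of int in Python, so reject it for numeric fields.
        bool_as_number = isinstance(value, bool) and type_name in ("integer", "number")
        if bool_as_number or not isinstance(value, _JSON_TYPES[type_name]):
            raise ToolArgumentError(f"field {name} must be of type {type_name}")
    return args


def call_tool(raw):
    """Fail fast: the tool body runs only after validation succeeds."""
    args = validate_args(raw, SEND_EMAIL_SCHEMA)
    return f"sent to {args['to']}"
```

With this in place, `brittle_call('{"subject": "hi"}')` still "succeeds" with a recipient of `None`, while `call_tool` given the same input raises `ToolArgumentError` before any side effect occurs.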

The “Pro Move”: Layering Semantic Validation

Once structural validation is in place, the next step is to add semantic validation to enforce domain-specific business rules that a JSON Schema cannot express.

Concept: After the schema confirms the format is correct, custom logic checks if the content makes sense in context.

Example: A `transfer_funds(amount, account)` tool’s schema can ensure `amount` is a number. Semantic validation can add a rule that `amount` must not exceed $50 unless an `override_approved` flag is true.

Benefit: This hardens the tool interface further, ensuring not just correct JSON but also correct intent. It elevates reliability by forcing the agent’s actions to stay within safe, predefined bounds.
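The `transfer_funds` rule can be sketched as a second check that runs only after structural validation has passed. The function and exception names are hypothetical, and the positive-amount rule is an added assumption rather than part of the example above.

```python
MAX_UNAPPROVED_AMOUNT = 50  # dollars, taken from the example rule above


class SemanticValidationError(ValueError):
    """Raised when well-formed arguments violate a business rule."""


def check_transfer(args):
    """Apply business rules to arguments that already passed schema validation."""
    amount = args["amount"]
    # Assumed extra rule for illustration: transfers must be positive.
    if amount <= 0:
        raise SemanticValidationError("amount must be positive")
    approved = args.get("override_approved", False)
    if amount > MAX_UNAPPROVED_AMOUNT and not approved:
        raise SemanticValidationError(
            f"amount {amount} exceeds {MAX_UNAPPROVED_AMOUNT} without override_approved"
        )
    return args


def transfer_funds(args):
    """Run the tool only when both layers of validation have passed."""
    checked = check_transfer(args)
    return f"transferred {checked['amount']} to {checked['account']}"
```

Keeping the semantic rules in their own function means the schema stays a pure description of shape, while limits such as the $50 threshold can change without touching the contract.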

5. Common Mistakes to Avoid

To maximize the benefits of schema validation, it is critical to avoid common pitfalls that can reintroduce ambiguity.

Using Loose Schemas in Critical Places: For tools that perform writes or state-changing actions (e.g., database updates, transactions), schemas must be strict. Setting `"additionalProperties": true` is discouraged, as it allows the model to include unexpected fields, undermining the guarantee of a precise contract. The goal is certainty: “if it passed, it has exactly what I expect, nothing more, nothing less.”

Silently Correcting Invalid Outputs: Do not automatically fix or coerce an agent’s invalid output. Doing so can mask underlying issues and lead to the agent performing unintended actions. The better approach is to fail loudly by returning a specific validation error to the agent (e.g., “Invalid tool arguments: missing `customer_id`”). This lets the model see exactly what was wrong and correct its output on the next attempt, preserving transparency and determinism.

Neglecting Schema Maintenance: Schemas are part of a tool’s API contract and must be versioned and updated as tool requirements evolve. An outdated schema can cause validation to fail on newly valid inputs or miss new types of invalid inputs. With strict validation, a mismatch between schema and tool usually surfaces right away as a validation failure rather than a silent one, but schemas must still be managed intentionally as part of the development lifecycle.
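A "fail loudly" recovery flow can be sketched as a bounded loop that hands the specific validation error back to the model instead of repairing the output. Everything here is hypothetical: `fake_model` is a stub standing in for a real model call, and the required fields and attempt limit are illustrative assumptions.

```python
import json

REQUIRED_FIELDS = ("customer_id", "amount")  # hypothetical tool contract
MAX_ATTEMPTS = 3                             # assumed retry budget


def validation_error(raw):
    """Return a specific error message, or None if the arguments are acceptable."""
    try:
        args = json.loads(raw)
    except json.JSONDecodeError:
        return "Invalid tool arguments: not valid JSON"
    if not isinstance(args, dict):
        return "Invalid tool arguments: expected a JSON object"
    for field in REQUIRED_FIELDS:
        if field not in args:
            return f"Invalid tool arguments: missing {field}"
    return None


def run_with_feedback(generate):
    """Request arguments from the model, returning each error to it verbatim."""
    feedback = None
    for _ in range(MAX_ATTEMPTS):
        raw = generate(feedback)
        feedback = validation_error(raw)
        if feedback is None:
            return json.loads(raw)  # valid: safe to hand to the tool
    raise RuntimeError(f"giving up after {MAX_ATTEMPTS} attempts: {feedback}")


def fake_model(feedback):
    """Stub model: omits customer_id at first, then corrects after feedback."""
    if feedback is None:
        return '{"amount": 10}'
    return '{"customer_id": "c-42", "amount": 10}'


result = run_with_feedback(fake_model)
print(result)
```

The loop never edits the model’s output; it either accepts it unchanged or reports why it was rejected, and the bounded attempt count keeps a persistently invalid call from turning into an endless retry loop.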