Executive Summary

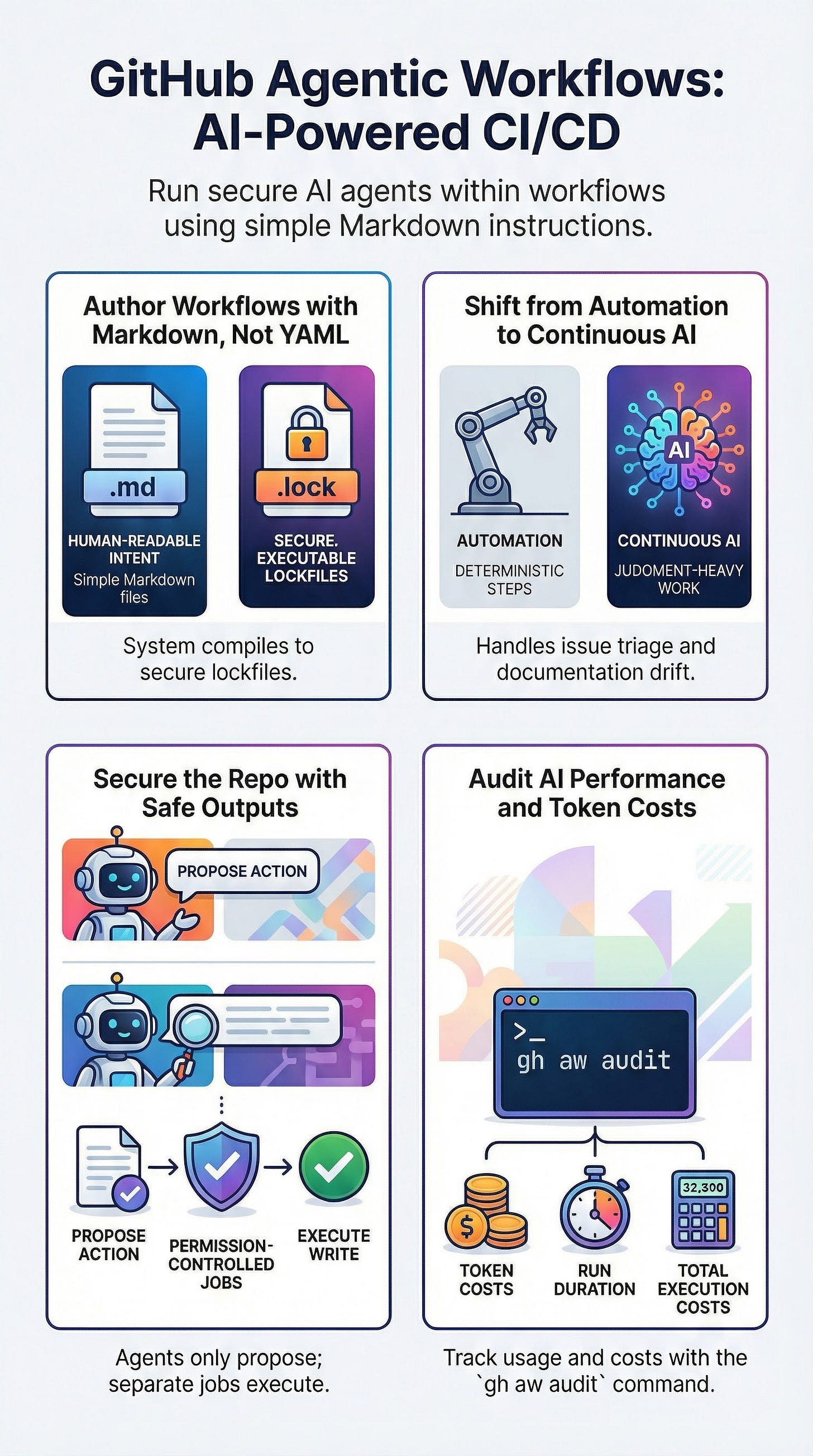

GitHub has introduced Agentic Workflows, currently in technical preview (announced February 13, 2026), marking a shift from deterministic CI/CD toward “Continuous AI.” This capability allows developers to run AI coding agents within GitHub Actions to handle judgment-heavy repository tasks—such as issue triage, security reviews, and documentation maintenance—using natural language instructions.

The system is built on a “security-first” architecture that separates AI “intent” from execution. Key features include a Markdown-based authoring experience, a hardened compilation process that generates lockfiles, and a “Safe Outputs” mechanism that prevents agents from performing direct, ungated writes to a repository. While positioned as an augmentation of traditional CI/CD rather than a replacement, Agentic Workflows represent a significant move toward integrating autonomous reasoning into the Software Development Life Cycle (SDLC).

--------------------------------------------------------------------------------

The Vision: Transitioning to Continuous AI

GitHub defines Continuous AI as the integration of AI into the SDLC to automate tasks that previously required human judgment. Unlike traditional CI/CD, which relies on deterministic build and test steps, Agentic Workflows focus on non-deterministic repository work.

Key Strategic Pillars

Augmentation, Not Replacement: As noted by Eddie Aftandilian, the system is designed to complement existing CI/CD pipelines, not replace them.

Scalability in Action: One cited example is Franck Nijhof’s use of the system to analyze thousands of open issues in the Home Assistant repository, which moves AI beyond “toy demos” into enterprise-scale repository management.

Human-in-the-Loop: The system emphasizes “reviewable gates,” ensuring that while the agent can “think” and “propose,” humans remain responsible for final actions like merging code.

--------------------------------------------------------------------------------

Technical Architecture and Mechanics

The fundamental innovation of Agentic Workflows is the separation of human-readable instructions from the machine-executable security configuration.

The Markdown and Lockfile Model

Source of Truth (Markdown): Workflows are authored as `.md` files in `.github/workflows/`. These files contain YAML frontmatter (triggers, tools, permissions) and a Markdown body that serves as the “operating procedure” or prompt for the agent.

Hardened Executable (Lockfile): Developers use the CLI command `gh aw compile` to generate a `.lock.yml` file. GitHub describes this lockfile as the security-hardened artifact that actually runs within GitHub Actions.

Dynamic Instruction Loading: At runtime, the system loads Markdown instructions dynamically. This allows for prompt tweaking without recompilation, though changes to permissions or tools require a fresh compile of the lockfile.
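The split between frontmatter and body can be illustrated with a small Python sketch. This is not how `gh aw compile` works internally. It only models the rule stated above: a body-only edit needs no recompile, while a frontmatter edit does. The function names (`split_workflow`, `needs_recompile`) and the sample frontmatter keys are assumptions made for illustration.

```python
from typing import NamedTuple


class WorkflowSource(NamedTuple):
    frontmatter: str  # raw YAML between the two '---' delimiters
    body: str         # Markdown instructions read by the agent


def split_workflow(text: str) -> WorkflowSource:
    """Split a workflow .md file into YAML frontmatter and Markdown body."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        # No frontmatter at all: the whole file is treated as the body.
        return WorkflowSource("", text.strip())
    for i in range(1, len(lines)):
        if lines[i].strip() == "---":
            frontmatter = "\n".join(lines[1:i]).strip()
            body = "\n".join(lines[i + 1:]).strip()
            return WorkflowSource(frontmatter, body)
    raise ValueError("frontmatter opened with '---' but never closed")


def needs_recompile(old_text: str, new_text: str) -> bool:
    """True when the frontmatter differs, so the lockfile may be stale."""
    old, new = split_workflow(old_text), split_workflow(new_text)
    return old.frontmatter != new.frontmatter


# Illustrative workflow source; the keys shown are assumptions, not a schema.
OLD = """---
on:
  issues:
    types: [opened]
permissions:
  contents: read
---
Label each new issue as bug or feature.
"""

PROMPT_ONLY = OLD.replace("bug or feature", "bug, feature, or question")
PERMS_CHANGED = OLD.replace("contents: read", "contents: write")

print(needs_recompile(OLD, PROMPT_ONLY))    # prompt tweak only
print(needs_recompile(OLD, PERMS_CHANGED))  # permissions changed
```

A check like this could run in a pre-commit hook to remind authors to recompile, although the real toolchain may already provide an equivalent safeguard.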

Tooling and Engines

The system is designed for “engine portability,” supporting multiple AI backends:

Supported Engines: Copilot CLI, Claude Code, and Codex.

CLI Integration: The `gh-aw` extension provides the core interface for compiling, running, and auditing workflows.

--------------------------------------------------------------------------------

Security and Defense-in-Depth

Security is the primary design constraint of the Agentic Workflows architecture, which is built to mitigate risks such as prompt injection and unauthorized data exfiltration.

Core Security Mechanisms

| Mechanism | Function |
| --- | --- |
| Least Privilege | Workflows are read-only by default. Developers must explicitly grant narrow permissions for specific tasks. |
| Safe Outputs | Agents cannot perform write operations directly. Instead, they request “Safe Outputs”: pre-declared operations (e.g., adding a label, posting a comment) executed by separate, permission-controlled jobs. |
| Agent Workflow Firewall (AWF) | Fences network egress via a proxy with a domain allowlist to prevent data exfiltration. |
| Lockdown Mode | Automatically enabled for public repositories; it limits the data returned to the agent to content only from users with push access, reducing exposure to untrusted input. |
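
The Safe Outputs idea, where the agent only proposes and a separate job applies, can be modeled in a few lines of Python. This is a conceptual sketch, not GitHub's implementation: `OutputRequest`, `gate_requests`, and the undeclared `push-commit` operation are hypothetical names used only to show the gating step.

```python
from dataclasses import dataclass

# Hypothetical set of operations pre-declared for one workflow.
DECLARED_SAFE_OUTPUTS = frozenset({"add-labels", "add-comment"})


@dataclass(frozen=True)
class OutputRequest:
    kind: str      # operation name proposed by the agent, e.g. "add-labels"
    payload: dict  # operation-specific data proposed by the agent


def gate_requests(requests, declared=DECLARED_SAFE_OUTPUTS):
    """Partition agent requests into (approved, rejected) by declared kind.

    Only approved requests would be handed to a separate job that holds
    write permission; the agent itself never writes.
    """
    approved, rejected = [], []
    for req in requests:
        if req.kind in declared:
            approved.append(req)
        else:
            rejected.append(req)
    return approved, rejected


requests = [
    OutputRequest("add-labels", {"labels": ["bug"]}),
    OutputRequest("push-commit", {"ref": "main"}),  # not declared: rejected
]
approved, rejected = gate_requests(requests)
print([r.kind for r in approved], [r.kind for r in rejected])
```

The design point is that the allowlist lives outside the agent's control, so a prompt-injected request for an undeclared operation is dropped before any write-capable job sees it.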

--------------------------------------------------------------------------------

Implementation Patterns

The technical preview suggests three progressive patterns for deploying agentic automation:

1. Issue Triage (Auto-Labeling)

Objective: Automatically categorize new issues based on type (bug, feature) and priority (high, medium, low).

Safety Posture: Uses read-only repository access; writes are restricted to `add-labels` and `add-comment` Safe Outputs.

Constraints: The agent is forbidden from inventing labels and must only use those existing in the repository.
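
The "no invented labels" constraint can be enforced outside the prompt as well. The sketch below is an illustrative post-check and not part of `gh aw`; the helper name `filter_labels` and the sample labels are assumptions.

```python
def filter_labels(proposed, existing):
    """Keep only proposed labels that already exist in the repository.

    Matching is case-insensitive; the repository's own spelling is returned.
    Returns (kept, dropped), with duplicates removed from kept.
    """
    canonical = {name.lower(): name for name in existing}
    kept, dropped = [], []
    for label in proposed:
        match = canonical.get(label.strip().lower())
        if match is None:
            dropped.append(label)   # the agent invented this label
        elif match not in kept:
            kept.append(match)
    return kept, dropped


repo_labels = ["bug", "feature", "priority-high", "priority-low"]
agent_labels = ["Bug", "priority-high", "needs-coffee"]

kept, dropped = filter_labels(agent_labels, repo_labels)
print(kept, dropped)
```

Logging the dropped labels is useful during prompt iteration, since a steady stream of invented labels suggests the instructions or the label list given to the agent need tightening.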

2. PR Security Review (ChatOps)

Objective: Conduct an automated security pass on Pull Requests triggered by a slash command (e.g., `/security-review`).

Functional Focus: Identifies risks such as SQL injection, auth/session mistakes, secrets exposure, and SSRF.

Output: Generates line-level comments and a summary risk rating (Safe, Needs Attention, or High Risk).
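
One way to make the summary rating reproducible is to derive it from the line-level findings with a fixed rule. The source does not say how the rating is computed, so the rule below is an assumption: no findings means Safe, any high-severity finding means High Risk, and anything else means Needs Attention. The `Finding` type and its severity values are also hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    path: str      # file the comment is attached to
    line: int      # line number within that file
    category: str  # e.g. "sql-injection", "ssrf", "secrets-exposure"
    severity: str  # hypothetical scale: "low", "medium", or "high"


def summarize_risk(findings) -> str:
    """Map line-level findings to one of the three summary ratings."""
    if not findings:
        return "Safe"
    if any(f.severity == "high" for f in findings):
        return "High Risk"
    return "Needs Attention"


findings = [
    Finding("app/db.py", 42, "sql-injection", "high"),
    Finding("app/http.py", 10, "ssrf", "medium"),
]
print(summarize_risk(findings))
```

Computing the rating deterministically from structured findings, rather than asking the model for it directly, keeps the summary consistent with the comments it posts.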

3. Documentation Maintenance

Objective: Prevent “docs drift” by identifying outdated READMEs or API docs following a merge to the main branch.

Safety Posture: The agent edits files in a workspace but can only propose changes via a `create-pull-request` Safe Output, ensuring human review before any documentation is updated.

--------------------------------------------------------------------------------

Measurement and Observability

GitHub provides built-in tools to transition agent management from “magic” to measurable engineering.

Metrics for Success

Setup Time: Target installation and first run within minutes.

Latency: Monitoring duration from Action start to conclusion across different AI engines.

Accuracy: Evaluated via True Positives, False Positives (harmless patterns flagged), and “Actionability” of the agent’s suggestions.

Cost Management: Detailed token usage and costs are available via the platform.
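
The accuracy metrics above reduce to simple ratios once reviewers have labeled a sample of agent findings. The sketch below assumes hand-counted inputs. Precision is the standard TP / (TP + FP), while the definition of "actionability" as the share of suggestions a reviewer could act on is an assumption, since the source does not define it.

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Share of flagged items that were real problems: TP / (TP + FP)."""
    flagged = true_positives + false_positives
    if flagged == 0:
        raise ValueError("nothing was flagged, so precision is undefined")
    return true_positives / flagged


def actionability_rate(actionable: int, total_suggestions: int) -> float:
    """Share of suggestions a reviewer could act on (assumed definition)."""
    if total_suggestions == 0:
        raise ValueError("no suggestions to evaluate")
    return actionable / total_suggestions


# Hypothetical review of 24 flagged items from a week of runs.
print(precision(true_positives=18, false_positives=6))
print(actionability_rate(actionable=9, total_suggestions=12))
```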

Essential CLI Commands for Auditing

`gh aw logs`: Analyzes runs and captures performance metrics.

`gh aw audit <run-id>`: Provides a deep dive into detailed token usage and specific execution costs.

`gh aw run`: Manual execution for testing and troubleshooting.

--------------------------------------------------------------------------------

Critical Warnings and Pitfalls

While powerful, GitHub emphasizes that Agentic Workflows are in early development and should be used “at your own risk.”

Prompt Injection: A persistent risk, especially in public repositories where external users can provide untrusted input via issues or comments.

Lockdown Mode Surprises: Maintainers may find that triage workflows “miss” issues from external contributors due to automatic security restrictions.

Reliability: System reliability is a function of prompt quality and tool design, not just the underlying AI model. Continuous iteration on the “engineering artifact” (the workflow) is required for production-like performance.
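
The Lockdown Mode surprise is easier to reason about with a toy model. The sketch below is not GitHub's implementation. It assumes, for illustration only, that lockdown behaves like a filter on author push access, and the `Issue` type and user names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    number: int
    author: str
    title: str


def visible_in_lockdown(issues, users_with_push):
    """Issues whose content the agent would see under the assumed filter."""
    return [i for i in issues if i.author in users_with_push]


def hidden_by_lockdown(issues, users_with_push):
    """Issues the agent would never see, and therefore never triage."""
    return [i for i in issues if i.author not in users_with_push]


issues = [
    Issue(1, "maintainer-a", "Refactor config loader"),
    Issue(2, "external-user", "Crash on startup"),
]
push_users = {"maintainer-a"}

print([i.number for i in visible_in_lockdown(issues, push_users)])
print([i.number for i in hidden_by_lockdown(issues, push_users)])
```

Under this model the external bug report is exactly the issue that goes untriaged, which is why maintainers of public repositories should expect such gaps and plan a manual path for contributor-filed issues.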