Executive Summary

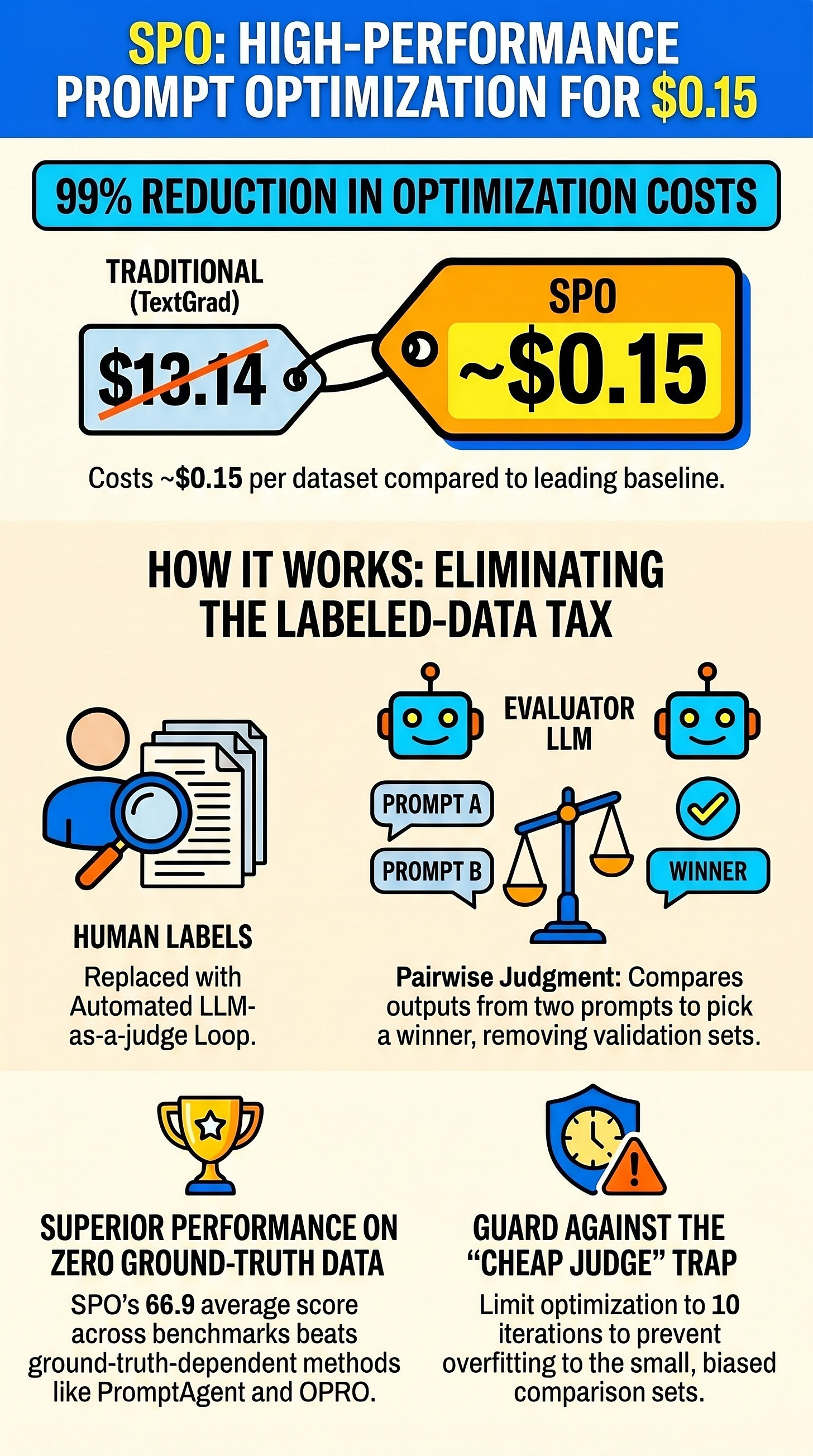

Self-Supervised Prompt Optimization (SPO) represents a paradigm shift in automated prompt engineering by eliminating the “labeled-data tax”—the requirement for ground-truth validation sets and manual metrics. By replacing traditional answer keys with an LLM-based pairwise evaluator, SPO achieves performance levels comparable to or exceeding state-of-the-art methods while reducing optimization costs by approximately 94% to 99%. While the method excels in tasks involving reasoning and surface-level correctness, it faces technical challenges regarding judge noise, overfitting to small sample sets, and “prompt hacking.” The significance of SPO lies not as a final solution, but as the foundational algorithm that established the viability of label-free optimization, spawning a new research thread focused on making autonomous feedback loops more reliable.

--------------------------------------------------------------------------------

Core Methodology: Transitioning to Label-Free Optimization

The primary innovation of SPO is the removal of the requirement for ground-truth labels. Traditionally, prompt optimizers (such as TextGrad, OPRO, and DSPy) rely on running candidate prompts against labeled examples and scoring them using metrics like F1 or exact match.

SPO replaces this framework with a self-supervised pairwise comparison loop:

Pairwise Evaluation: The system runs two prompts—the current “incumbent” and a “challenger”—on the same set of unlabeled questions.

The LLM Judge: An evaluator LLM reviews the two outputs and picks which one better satisfies the task instructions.

Binary Signal: Unlike methods that return calibrated reward scores, the SPO judge provides a binary flag (accept or reject). If the challenger wins, it becomes the new incumbent.

Recursive Optimization: The optimizer LLM uses the incumbent prompt and its successful outputs as raw material to generate the next candidate prompt.

The Optimization Loop Mechanics

SPO operates on a minimalist budget compared to its predecessors:

Iteration Volume: Typically 10 rounds.

Sample Size: 3 unlabeled questions per round, randomly sampled.

Call Volume: Approximately 8 LLM calls per iteration.

--------------------------------------------------------------------------------

Performance and Economic Impact

The most significant contribution of SPO is the drastic reduction in API call costs. By operating on a “tiny budget” of iterations and samples, it achieves a different deployment economics for prompt engineering.

Comparative Cost and Performance Analysis

Method

Average Cost (USD)

Average Score (Closed Benchmarks)

SPO

$0.15

66.9

OPRO

$4.51

66.6

PromptAgent

$2.71

65.0

PromptBreeder

$4.82

64.5

TextGrad

$13.14

63.9

APE

$9.07

N/A

Note: Benchmarks include GPQA, AGIEval-MATH, LIAR, WSC, and BBH-Navigate.

The data indicates that SPO costs between 1.1% and 5.6% of ground-truth-dependent methods while maintaining a higher average score across tested datasets.

--------------------------------------------------------------------------------

Technical Limitations and Risk Factors

Despite its efficiency, SPO introduces specific vulnerabilities inherent to self-supervised LLM loops.

1. Overfitting and Performance Degradation

The document highlights a “failure mode” where performance improves in early rounds but begins to degrade if optimization continues too long (typically beyond 10 iterations). This is attributed to the optimizer becoming “too good” at winning on the small, shifting sample set (3 questions per round) at the expense of general performance on broader, held-out benchmarks.

2. Judge Weaknesses

Noise and Bias: Pairwise evaluations are susceptible to position and presentation bias. While SPO uses randomized evaluation rounds to mitigate this, the bias cannot be entirely eliminated.

Circularity: If the same model family acts as both the optimizer and the judge, the “improvements” may merely be artifacts of that model family’s specific preferences rather than objective task success.

Prompt Hacking: Automated systems may optimize for the evaluator’s specific rubric in a “brittle, misaligned way.” This results in prompts that satisfy the judge but do not necessarily improve task outcomes.

3. Diminishing Returns with Complexity

Research suggests that while automated prompt engineering helps significantly with simpler tasks, gains diminish as task complexity grows. This is particularly true for:

Multi-step reasoning.

Tool-using settings.

Tasks requiring hidden states or latent constraints not visible in the final output.

--------------------------------------------------------------------------------

Evolution of the Field: Post-SPO Research

In the year following SPO’s release, the research community has diverged into two primary paths to address the “proxy metric” problem.

Direction A: Enhanced Signal Quality

Methods like GEPA argue that language is a richer learning medium than binary rewards. These systems use “reflective textual feedback” from execution traces, reporting significant improvements (e.g., +12 points on AIME-2025) over previous iterations.

Direction B: Advanced Search and Statistics

Methods like PDO (Pairwise Dueling-bandit Optimization) and PrefPO treat the comparison as a statistical problem:

PDO: Reframes the loop as a “dueling-bandit problem” and uses cross-family validation (using different LLMs to judge) to ensure improvements are not model-specific artifacts.

PrefPO: Focuses on reducing “prompt hacking” vulnerabilities, reporting much lower rates of brittle optimization compared to systems like TextGrad.

--------------------------------------------------------------------------------

Final Synthesis: Strategic Implications

SPO’s primary legacy is the transformation of label-free prompt optimization from a theoretical goal into a reproducible algorithm. However, its application requires specific strategic considerations:

Task Alignment: SPO is most effective for tasks where “better output” is clearly visible on the page (e.g., reasoning quality, rule-following, and surface-level correctness). It is more dangerous for tasks with hidden or long-horizon objectives.

The Proxy Trap: The fundamental challenge remains “keeping the optimizer honest.” Because SPO optimizes against an LLM judge rather than a ground-truth key, there is a constant risk that the target of the optimizer will diverge from the actual task.

Validation Requirement: Successful deployment requires validating the optimizer’s results against the thing the user “actually cares about,” rather than just the metrics the optimizer can see.

In summary, SPO proves that labels are no longer a strictly necessary cost for prompt optimization, provided the user accepts the trade-off of managing a more complex and potentially deceptive feedback loop.